Why most AI investment isn’t paying off

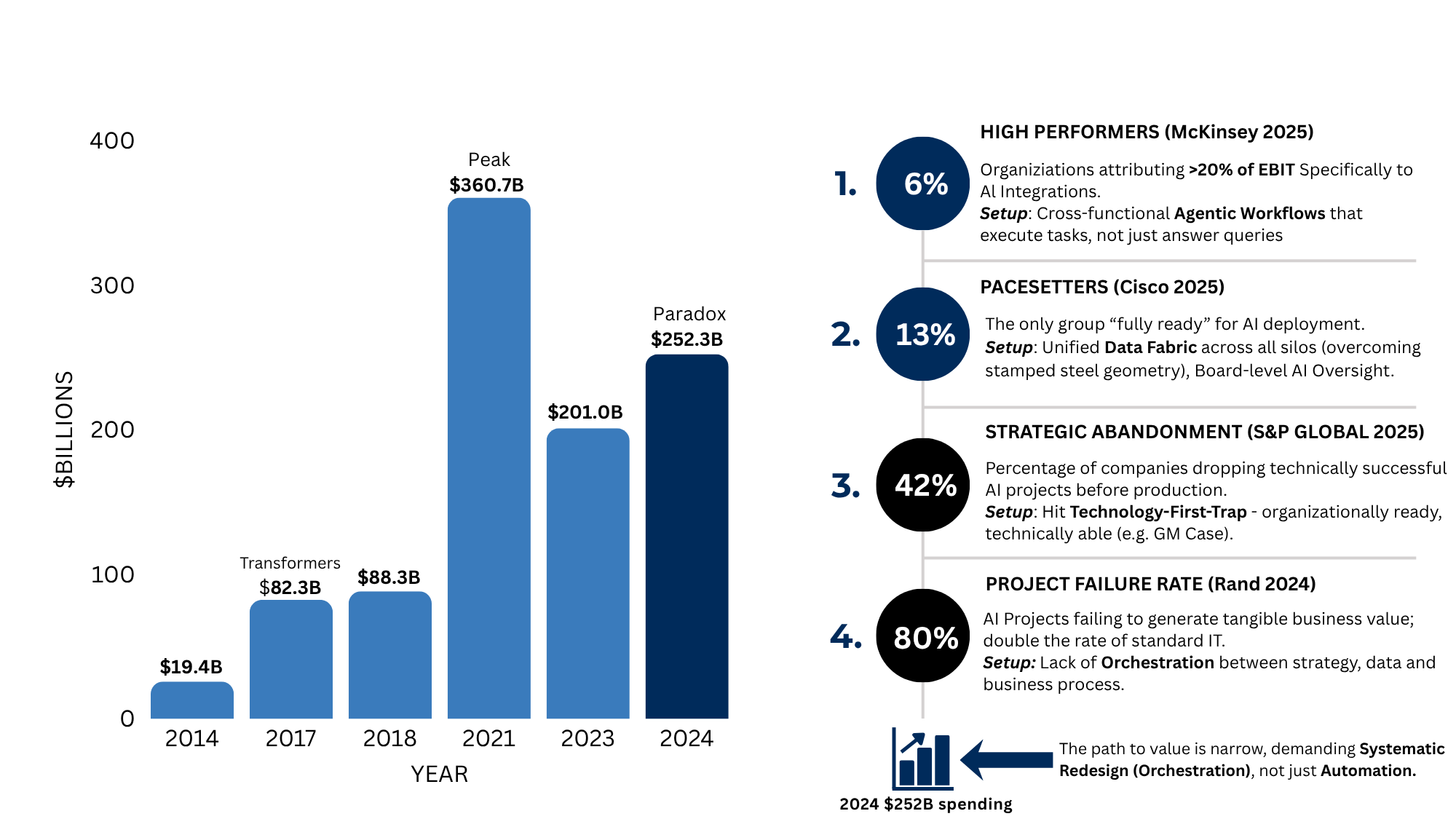

Organizations are spending more on AI than at any point in the technology’s history. Cisco’s AI Readiness Index reports that half of organizations with more than 500 employees now allocate 10% to 30% of their total IT budgets to AI, and 92% plan to increase their AI investments over the next three years.

Yet readiness scores are declining, project abandonment is rising, and nearly half of business leaders report either no gains or results that fall short of expectations. McKinsey’s 2025 global survey of nearly 2,000 respondents found that just 1% of C-suite leaders describe their generative AI deployments as mature. S&P Global reports that the share of companies abandoning the majority of their AI initiatives before production rose from 17% to 42% year over year.

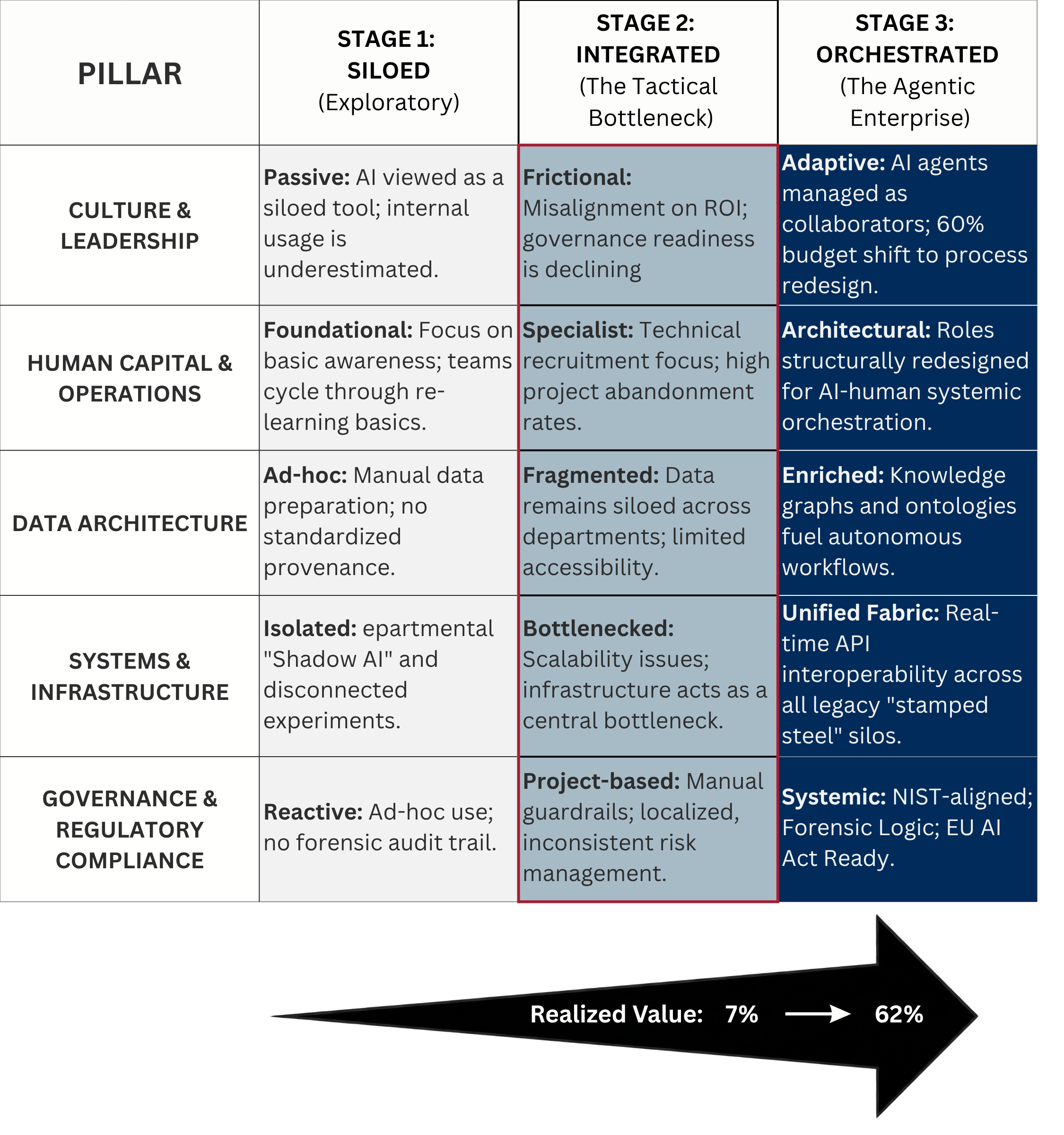

AI project failure is fundamentally an organizational learning problem, not a technology deficit.

Israeli and Ascarza describe this in Harvard Business Review as a “technology-first trap”: organizations deploy AI solutions department by department without linking them to enterprise goals, producing technically successful implementations that never reach production. The classic case: General Motors applied generative-design software to produce a seat bracket 40% lighter and 20% stronger than the original, yet the part never entered production because GM’s supply chain, built for stamped steel, could not accommodate the AI-generated geometry. The technology worked. The organization was not ready.